THE AGENTIC INFERENCE CASCADE: FORCED COMMITMENT, SEQUENTIAL LAYER REPRICING

Trace AMD's TAM revision through each supply chain layer. Read reclassification, not rally.

“Insights age like wine; news ages like milk. The evidence below is a timestamp of a recurring cycle. Observe the mechanism before it repeats.”

1. Eternal Logic

Core Thesis

Each technology deployment cycle generates a sequenced repricing of its supply chain. Capital allocates first to the supply-constrained layer. In the agentic AI phase, that layer is CPU-class inference compute. A positive feedback loop reprices adjacent layers from design through assembly. Application software receives capital recognition last.

Operating Mechanism

AI workload composition determines hardware capital allocation. Training requires GPU-class parallel compute. Agentic inference requires CPU-class sequential reasoning at low latency. As agentic deployment scales, inference volume grows faster than training volume. CPU vendors capture incremental AI capex previously allocated to GPU hardware. Hyperscaler commitments create multi-quarter order visibility. Visibility drives foundry capacity allocation and triggers server DRAM qualification. Memory qualification enables server assembler revenue acceleration. Assembler revenue confirms the capex rationale and generates additional procurement. A positive feedback loop closes without external input.

Systemic Conclusion

The forcing function is procurement lead time. Advanced component supply requires multi-quarter lead times from order to delivery. A buyer who defers commitment cannot recover lost deployment time. This asymmetry is structural. Capital commits early not from optimism but from structural constraint. The cascade does not require demand certainty. It requires only that lead time asymmetry persists.

2. The 2026-05-06 Case Study: Empirical Proof

Trigger

On 2026-05-05, after the US market close, AMD released first-quarter results. Revenue grew 38% year-over-year and the data center segment grew 57%. AMD revised its server CPU total addressable market (TAM) growth forecast from approximately 18% to above 35% annually. AMD projected a market size exceeding 120 billion USD by the end of the decade. CEO Lisa Su attributed the revision to agentic AI workloads driving CPU demand above prior estimates. This release initiated a seven-layer repricing cascade within 24 hours.

Transmission

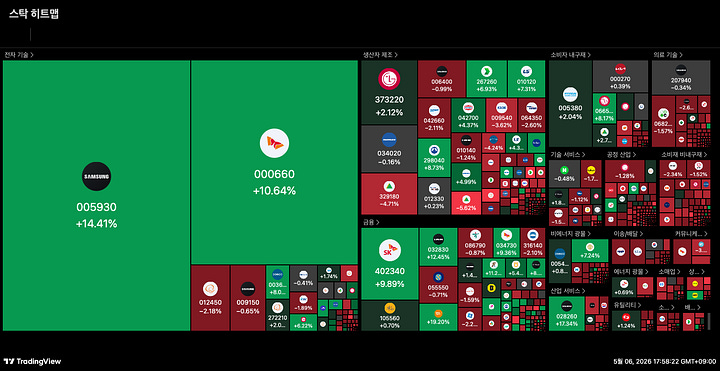

The Korean equity session on 2026-05-06 opened four hours after AMD released earnings. Institutional capital entered with a pre-established supply-side framework. Both major Korean memory suppliers had confirmed supply constraint conditions in the preceding weeks. SK Hynix Q1 2026 results, reported thirteen days prior, confirmed a record 72% operating margin. Management stated that customer demand exceeded supply capacity. The Samsung Electronics DS division reported record Q1 results on April 30. Its Q2 guidance cited a planned response to new CPU memory demand in the second half. AMD’s earnings confirmed the demand side of a supply constraint both suppliers had already documented. Foreign institutional investors directed 3.01 trillion KRW into the Korean semiconductor sector, representing 96% of total foreign spot net buying. Samsung Electronics gained 14.41% and SK Hynix gained 10.64%. The KOSPI advanced 6.45% while the KOSDAQ declined 0.29%. Sector-level targeting, not broad risk appetite, produced the divergence.

The US session then priced the remaining supply chain layers. In CPU design, AMD gained 18.62% and INTC gained 4.49%. In CPU architecture licensing, ARM gained 13.63%. In semiconductor equipment, ASML gained 7.06%. In foundry, TSMC gained 6.36%. In memory, MU gained 4.12%. In server assembly, SMCI gained 24.56%, DELL gained 10.39%, and HPE gained 1.08%. SMCI integrates AMD and Intel CPUs into AI servers for hyperscalers. It recorded the highest single-session gain among all seven layers, confirming transmission to the terminal layer.
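The per-layer moves quoted for the US session can be tabulated to check the ranking claim. The Python sketch below is illustrative only: the `us_session_moves` dictionary and the `layer_leaders` helper are hypothetical names, the figures are the ones quoted in the text, and the Korean memory names are left out.

```python
# Single-session percentage gains quoted in the text for the US session.
# The dictionary layout and the function name are illustrative assumptions.
us_session_moves = {
    "CPU design": {"AMD": 18.62, "INTC": 4.49},
    "CPU architecture licensing": {"ARM": 13.63},
    "Semiconductor equipment": {"ASML": 7.06},
    "Foundry": {"TSMC": 6.36},
    "Memory": {"MU": 4.12},
    "Server assembly": {"SMCI": 24.56, "DELL": 10.39, "HPE": 1.08},
}


def layer_leaders(moves):
    """Return (layer, ticker, gain) for each layer's best performer, largest gain first."""
    leaders = []
    for layer, tickers in moves.items():
        ticker = max(tickers, key=tickers.get)  # ticker with the largest gain in this layer
        leaders.append((layer, ticker, tickers[ticker]))
    return sorted(leaders, key=lambda row: row[2], reverse=True)


leaders = layer_leaders(us_session_moves)
for layer, ticker, gain in leaders:
    print(f"{layer:<28} {ticker:<5} {gain:+.2f}%")
```

Sorted this way, server assembly sits at the top of the list and memory at the bottom of the US-listed layers.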

Evidence

Every supply chain infrastructure layer recorded gains. SOX advanced 4.48% to a record at 11,472.8. NVDA advanced 5.77%, reflecting concurrent GPU demand within the same session. The US 10-year Treasury yield declined 6.6 basis points to 4.352%, removing a valuation headwind. CBOE VIX held at 17.39 and MOVE at 76.78. Stable volatility confirmed genuine spot demand over options-driven momentum. Palantir Technologies declined 1.58%. Palantir sells AI software platforms. Its loss while AI hardware advanced is the primary discriminating signal between infrastructure phase positioning and generalized AI sentiment.

Outcome

The S&P 500 advanced 1.46% to 7,365.03. The Nasdaq 100 advanced 2.09% to 28,599.17. The Russell 2000 advanced 1.47%, matching the S&P 500 within one basis point and confirming broad market participation.

3. The Structural Filter: Identifying the Mechanism Across Cycles

Filter 1: The Workload Composition Reclassification Signal

If a hardware vendor revises its addressable market growth rate upward by more than 50% relative to its prior forecast, a workload shift is active. Capital begins migrating from the incumbent compute class to the challenger. The invalidation point is simultaneous acceleration in both classes without total capex expansion. That pattern indicates aggregate spending growth, not workload rotation.
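As a worked check of Filter 1, the sketch below reads the threshold as a relative change in the forecast growth rate, which is an interpretive assumption. The function names are hypothetical. The inputs are the approximate figures from the case study (about 18% revised to above 35%), so the computed revision is a rough lower bound.

```python
def relative_revision(old_growth: float, new_growth: float) -> float:
    """Relative change in a forecast growth rate, as a fraction of the old rate."""
    if old_growth <= 0:
        raise ValueError("old_growth must be positive")
    return (new_growth - old_growth) / old_growth


def reclassification_signal(old_growth: float, new_growth: float,
                            threshold: float = 0.50) -> bool:
    """True when the upward revision exceeds the threshold (assumed relative, default 50%)."""
    return relative_revision(old_growth, new_growth) > threshold


# Case-study inputs: roughly 18% annual growth revised to above 35%.
revision = relative_revision(0.18, 0.35)
print(f"relative revision: {revision:.1%}")
print("Filter 1 active:", reclassification_signal(0.18, 0.35))
```

On these inputs the revision is roughly 94% of the prior growth rate, well above the 50% threshold. In absolute terms the same revision is 17 percentage points.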

Filter 2: The Cross-Timezone Propagation Signal

Foreign spot concentration above 90% in supply chain names, in the first open market after hardware earnings, confirms structural propagation. Broader index underperformance in the same session confirms supply chain tracking over sentiment. The invalidation point is broad index participation without supply chain concentration.
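Filter 2 combines two conditions, which can be sketched as a single boolean check. The function and parameter names below are hypothetical, and treating the KOSDAQ as the broader comparison index is an assumption drawn from the divergence described in the case study.

```python
def propagation_signal(foreign_concentration: float,
                       supply_chain_returns: list[float],
                       broad_index_return: float,
                       concentration_threshold: float = 0.90) -> bool:
    """True when foreign net buying is concentrated in supply chain names
    and the broader index underperforms every one of those names."""
    if not supply_chain_returns:
        raise ValueError("supply_chain_returns must not be empty")
    concentrated = foreign_concentration > concentration_threshold
    underperformed = all(broad_index_return < r for r in supply_chain_returns)
    return concentrated and underperformed


# Case-study inputs: 96% concentration, Samsung +14.41%, SK Hynix +10.64%,
# KOSDAQ -0.29% (used here as the broader index, an assumption).
signal = propagation_signal(0.96, [14.41, 10.64], -0.29)
print("Filter 2 active:", signal)
```

The invalidation case, broad participation without concentration, corresponds to a low `foreign_concentration` with a `broad_index_return` at or above the supply chain returns, for which the function returns `False`.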

Filter 3: The Terminal Layer Divergence Signal

If the terminal assembly layer gains more than 15 percentage points above the application layer in a single session, the infrastructure phase dominates. Application software recording a loss while infrastructure gains confirms capital rotation. The invalidation point is application software revenue growth exceeding infrastructure hardware growth in two consecutive quarters, at which point the regime inverts.
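The Filter 3 spread is simple arithmetic on two single-session returns. The sketch below uses SMCI as the terminal assembly proxy and Palantir as the application proxy, following the case study. The function names are hypothetical.

```python
def terminal_divergence(assembly_return_pct: float,
                        application_return_pct: float) -> float:
    """Spread, in percentage points, between the assembly and application layers."""
    return assembly_return_pct - application_return_pct


def infrastructure_phase(assembly_return_pct: float,
                         application_return_pct: float,
                         spread_threshold_pp: float = 15.0) -> tuple[bool, bool]:
    """Return (spread exceeds threshold, application fell while assembly rose)."""
    spread = terminal_divergence(assembly_return_pct, application_return_pct)
    dominates = spread > spread_threshold_pp
    rotation = application_return_pct < 0 < assembly_return_pct
    return dominates, rotation


# Case-study inputs: SMCI +24.56%, Palantir -1.58%.
spread = terminal_divergence(24.56, -1.58)
dominates, rotation = infrastructure_phase(24.56, -1.58)
print(f"spread: {spread:.2f} pp, dominates: {dominates}, rotation: {rotation}")
```

With these inputs the spread is about 26.14 percentage points, so both the threshold condition and the rotation condition hold.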

4. Regime Dynamics: The Lifecycle of the Mechanism

Regime Persistence

Infrastructure repricing regimes persist through three structural frictions. Forward procurement commitments, once made, lock supply chain participants into multi-quarter allocation cycles. Qualification cycles maintain pricing power above competitive equilibrium throughout deployment. Early adoption growth rates attract capital before competitive supply forms.

Regime Exhaustion

Two internal contradictions accumulate in every infrastructure phase. Supply investment resolves the scarcity generating premium pricing, weakening the positive feedback loop. Custom silicon captures workload share from general-purpose hardware as deployment scales. Systemic exhaustion begins when both contradictions appear in consecutive reported periods.

Archival End-State

Three simultaneous conditions define the archival end-state. The terminal assembler reports declining order backlog for two consecutive quarters. The primary constrained component reports margins at commodity cycle levels. Application software revenue growth exceeds infrastructure hardware growth for two consecutive quarters. When all three hold, the regime inversion is confirmed. The capital allocation pattern then applies to the application layer. The sequence repeats.
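The three end-state conditions can be expressed as one conjunction over quarterly series. This is a minimal sketch with hypothetical names. The text does not quantify "commodity cycle levels", so the `commodity_margin_ceiling` parameter is an assumption, and every number in the usage example is invented for illustration rather than taken from reported data.

```python
from typing import Sequence


def declined_two_quarters(series: Sequence[float]) -> bool:
    """True when the last two quarter-over-quarter changes are both declines."""
    return len(series) >= 3 and series[-1] < series[-2] < series[-3]


def exceeded_two_quarters(a: Sequence[float], b: Sequence[float]) -> bool:
    """True when series a exceeded series b in each of the last two quarters."""
    return len(a) >= 2 and len(b) >= 2 and a[-1] > b[-1] and a[-2] > b[-2]


def archival_end_state(backlog: Sequence[float],
                       component_margin: float,
                       commodity_margin_ceiling: float,
                       app_growth: Sequence[float],
                       infra_growth: Sequence[float]) -> bool:
    """All three conditions must hold at the same time."""
    return (declined_two_quarters(backlog)
            and component_margin <= commodity_margin_ceiling
            and exceeded_two_quarters(app_growth, infra_growth))


# Hypothetical inputs, for illustration only.
confirmed = archival_end_state(
    backlog=[120.0, 110.0, 95.0],      # two consecutive declines
    component_margin=0.20,
    commodity_margin_ceiling=0.25,     # assumed definition of "commodity level"
    app_growth=[0.30, 0.35],
    infra_growth=[0.25, 0.20],
)
print("archival end-state:", confirmed)
```

Because the check is a strict conjunction, a single quarter of backlog recovery or a margin above the assumed ceiling is enough to return `False`.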

“This content is for informational and educational purposes only and does not constitute financial, investment, tax, or legal advice. Past performance is not indicative of future results. All investments involve risk, including possible loss of principal. Consult a qualified advisor before investing. Author may hold positions in discussed securities.”


Your framework is extremely strong on supply-chain propagation and infrastructure repricing dynamics. What I keep wondering, though, is whether AI may behave less like a classical industrial cycle and more like a recursive general-purpose technology.

In railways or traditional infrastructure, efficiency improvements mostly optimize an existing function. But with AI and robotics, each new qualitative capability seems to create entirely new layers of demand rather than simply normalizing the previous one. A robot that can fold laundry, cook, or navigate physical environments is not just a “faster model”; it requires different computational architectures, persistent inference, memory, sensing, coordination, and entirely new infrastructure layers.

So I wonder whether the transition from infrastructure phase to application phase may end up being far less sequential than in previous cycles, because the application layer itself continuously expands the infrastructure requirement. Your analysis is one of the clearest I’ve read on the current phase, which is why I’m very curious how you think about this recursive aspect of AI demand.